O frabjous day! Callooh! Callay! ONTAP 8.3 is out, and with it, the long-promised demise of the dedicated root aggregate for lower-end systems!

To recap: NetApp has always said to have a dedicated root aggregate. But until Clustered ONTAP, that was more of a recommendation, like, say, brush your teeth morning, noon and night. When you only have 24 drives in a system, throwing away 6 of them to boot the thing seems like a silly idea. The lower-end (FAS2xxx) systems represent a very large number of NetApp’s sales by controller count, and for these systems, Clustered ONTAP was not a great move because of that requirement. With 8.3 being Clustered ONTAP only, there had to be a solution to this pretty serious and valid objection, and there is: Advanced Drive Partitioning (ADP).

What is ADP? Basically it’s partitioning drives, and being able to assign partitions to RAID groups and aggregates. Cool, right? Well, yes, mostly. ADP can be used on All-Flash-FAS (AFF), but that is out of scope for this post. There are some important things to be aware of for these lower end systems.

- Systems using ADP need an ADP-formatted spare, plus non-ADP spares for any other drives

- ADP can only be used for internal drives on a FAS22xx or FAS25xx system

- ADP drives can only be part of a RAID group of ADP drives

- SSDs can now be pooled between controllers!

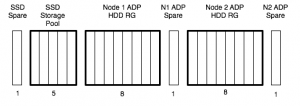

If a system is only using the internal drives, chances are it is going to be a smaller system, and most of these don’t matter. The issue comes when it is time to add a disk shelf. Consider the following ADP layout, assuming one data aggregate per controller:

If we were to add a shelf of 24 disks and split it evenly between controllers, we would need to do some thinking first. We can’t add the new disks to the ADP RAID group, and we need a non-ADP spare for each controller. With ADP, and our 42 (18+24) SAS drives (21 per controller), we have used them like this:

- N1_aggr0

- N1_aggr1_rg0 – 6 data, 2 parity

- N1_aggr1_rg1 – 9 data, 2 parity

- N1 ADP Spare – 1

- N1 Non ADP Spare – 1

- N2_aggr0

- N2_aggr1_rg0 – 6 data, 2 parity

- N2_aggr1_rg1 – 9 data, 2 parity

- N2 ADP Spare – 1

- N2 Non ADP Spare – 1

For a total of:

- 8 parity

- 4 spare

- 30 data

If we didn’t use ADP, we’d be using them like this:

- N1_aggr0 – 1 root, 2 parity

- N1_aggr1_rg0 – 15 data, 2 parity

- N1 Non ADP Spare – 1

- N2_aggr0 – 1 root, 2 parity

- N2_aggr1_rg0 – 15 data, 2 parity

- N2 Non ADP Spare – 1

For a total of:

- 8 parity

- 2 spare, plus 2 root

- … annnd 30 data
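
If you want to check the one-shelf arithmetic yourself, here is a minimal sketch in Python. The per-controller counts are copied from the lists above; the names `ADP_NODE`, `NON_ADP_NODE` and `tally` are made up for this illustration. I'm assuming the ADP root aggregate lives on partitions and so uses no whole drives, and I count the dedicated root drive separately from spares in the non-ADP case.

```python
from collections import Counter

# Per-controller layouts for 18 internal + 24 shelf drives (21 per controller).
# Counts are taken from the lists in this post; the structure is illustrative.
# Assumption: with ADP, aggr0 sits on root partitions and uses no whole drives.
ADP_NODE = [
    ("aggr1_rg0", {"data": 6, "parity": 2}),
    ("aggr1_rg1", {"data": 9, "parity": 2}),
    ("adp_spare", {"spare": 1}),
    ("non_adp_spare", {"spare": 1}),
]
NON_ADP_NODE = [
    ("aggr0", {"root": 1, "parity": 2}),
    ("aggr1_rg0", {"data": 15, "parity": 2}),
    ("non_adp_spare", {"spare": 1}),
]


def tally(node_layout, controllers=2):
    """Sum drive roles across an HA pair whose nodes share the same layout."""
    totals = Counter()
    for _name, roles in node_layout:
        for role, count in roles.items():
            totals[role] += count * controllers
    return dict(totals)


adp = tally(ADP_NODE)
non_adp = tally(NON_ADP_NODE)
print("ADP:    ", adp, "total", sum(adp.values()))
print("non-ADP:", non_adp, "total", sum(non_adp.values()))
```

Both layouts account for all 42 drives and both end up with 30 data drives, which is why one shelf is a wash.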

I toyed with running the numbers on moving the SSD drives to the shelf, meaning we could have larger ADP partitions used in RAID groups, but that still bites you in the end, as you will end up with the same number of RAID groups, but less balanced sizes as more shelves are added.

If we move to 2 shelves – 66 (18+24+24) SAS drives, we could use them like this with ADP:

- N1_aggr0

- N1_aggr1_rg0 – 6 data, 2 parity

- N1_aggr1_rg1 – 9 data, 2 parity

- N1_aggr1_rg2 – 10 data, 2 parity

- N1 ADP Spare – 1

- N1 Non ADP Spare – 1

- N2_aggr0

- N2_aggr1_rg0 – 6 data, 2 parity

- N2_aggr1_rg1 – 9 data, 2 parity

- N2_aggr1_rg2 – 10 data, 2 parity

- N2 ADP Spare – 1

- N2 Non ADP Spare – 1

For a total of:

- 12 parity

- 4 spare

- 50 data

Or this without ADP:

- N1_aggr0 – 1 root, 2 parity

- N1_aggr1_rg0 – 15 data, 2 parity

- N1_aggr1_rg1 – 10 data, 2 parity

- N1 Non ADP Spare – 1

- N2_aggr0 – 1 root, 2 parity

- N2_aggr1_rg0 – 15 data, 2 parity

- N2_aggr1_rg1 – 10 data, 2 parity

- N2 Non ADP Spare – 1

For a total of:

- 12 parity

- 50 data

- 2 spare, plus 2 root

At 3 shelves, the story changes. With 90 (18+24+24+24) SAS drives, we could use them like this with ADP:

- N1_aggr0

- N1_aggr1_rg0 – 6 data, 2 parity

- N1_aggr1_rg1 – 9 data, 2 parity

- N1_aggr1_rg2 – 10 data, 2 parity

- N1_aggr1_rg3 – 10 data, 2 parity

- N1 ADP Spare – 1

- N1 Non ADP Spare – 1

- N2_aggr0

- N2_aggr1_rg0 – 6 data, 2 parity

- N2_aggr1_rg1 – 9 data, 2 parity

- N2_aggr1_rg2 – 10 data, 2 parity

- N2_aggr1_rg3 – 10 data, 2 parity

- N2 ADP Spare – 1

- N2 Non ADP Spare – 1

For a total of:

- 16 parity

- 4 spare

- 70 data

Or this without ADP:

- N1_aggr0 – 1 root, 2 parity

- N1_aggr1_rg0 – 19 data, 2 parity

- N1_aggr1_rg1 – 18 data, 2 parity

- N1 Non ADP Spare – 1

- N2_aggr0 – 1 root, 2 parity

- N2_aggr1_rg0 – 19 data, 2 parity

- N2_aggr1_rg1 – 18 data, 2 parity

- N2 Non ADP Spare – 1

For a total of:

- 12 parity

- 74 data

- 2 spare, plus 2 root
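
The three-shelf gap comes down to RAID group count: every RAID-DP group costs 2 parity drives, and the ADP layout needs four groups per controller where the non-ADP layout gets away with two plus a root aggregate. A rough sketch of that accounting follows, with the data-drive counts taken from the lists above. The function `pair_totals` and its parameters are hypothetical names for this example, not anything from ONTAP.

```python
PARITY_PER_RG = 2  # RAID-DP: two parity drives per RAID group


def pair_totals(data_per_rg, dedicated_root, spares_per_node, nodes=2):
    """Whole-drive totals for an HA pair, given one node's RAID group data counts.

    dedicated_root=True models the non-ADP case in this post:
    a 3-drive root aggregate (1 root + 2 parity) on each node.
    """
    data = sum(data_per_rg)
    parity = PARITY_PER_RG * len(data_per_rg)
    root = 0
    if dedicated_root:
        root = 1
        parity += PARITY_PER_RG
    per_node = {"data": data, "parity": parity, "root": root, "spare": spares_per_node}
    return {role: count * nodes for role, count in per_node.items()}


# 3 shelves: 90 drives, 45 per controller.
adp = pair_totals([6, 9, 10, 10], dedicated_root=False, spares_per_node=2)
non_adp = pair_totals([19, 18], dedicated_root=True, spares_per_node=1)

print("ADP:    ", adp, "total", sum(adp.values()))
print("non-ADP:", non_adp, "total", sum(non_adp.values()))
print("data drives lost to ADP:", non_adp["data"] - adp["data"])
```

With these inputs the ADP layout gives up 4 data drives to parity and spares, matching the totals above.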

So, a couple of conclusions:

- ADP is good for internal shelf only systems

- ADP is neutral for 1 or 2 shelf systems

- ADP is bad for 3+ shelf systems

- ADP is awesome for Flashpools (not really a conclusion from this post, but trust me on it) 😉

As a footnote: savvy readers will notice I’ve got unequally sized RAID groups in some of these configs. With ONTAP 8.3, the Physical Storage Management Guide (page 107) now says:

All RAID groups in an aggregate should have a similar number of disks. The RAID groups do not have to be exactly the same size, but you should avoid having any RAID group that is less than one half the size of other RAID groups in the same aggregate when possible.

This is in comparison to ONTAP 8.2 Physical Storage Management Guide (page 91) which says:

All RAID groups in an aggregate should have the same number of disks. If this is impossible, any RAID group with fewer disks should have only one less disk than the largest RAID group.
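
To see what the change in wording means for the layouts in this post, here is a small sketch that checks a list of RAID group sizes against each recommendation. The function names are made up, and the checks are my reading of the two quotes: "at most one disk smaller than the largest" for 8.2, and "no group smaller than half the largest" for 8.3. I'm also assuming a partitioned RAID group counts the same way as a whole-disk one for this purpose.

```python
def ok_under_82_guidance(rg_sizes):
    """8.2 wording: a smaller RAID group has at most one disk fewer than the largest."""
    return max(rg_sizes) - min(rg_sizes) <= 1


def ok_under_83_guidance(rg_sizes):
    """8.3 wording, read as: no RAID group smaller than half the largest one."""
    return 2 * min(rg_sizes) >= max(rg_sizes)


# RAID group sizes (data + parity) per controller for the 3-shelf layouts above.
layouts = {
    "ADP, 3 shelves": [6 + 2, 9 + 2, 10 + 2, 10 + 2],
    "non-ADP, 3 shelves": [19 + 2, 18 + 2],
}

for name, sizes in layouts.items():
    print(name, sizes,
          "8.2 ok:", ok_under_82_guidance(sizes),
          "8.3 ok:", ok_under_83_guidance(sizes))
```

Under this reading, the unequal ADP groups only fit the newer, looser recommendation.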

Why are you not using the maximum RG size?

20 (18+2) for SATA/NL-SAS

28 (26+2) for SAS/SSD

In a small configuration there is no reason to divide a small number of disks between two controllers. An active-passive configuration is more reasonable in this situation, as the active controller will never be fully loaded with so few disks.

I agree – in general now we can do a true active/passive config, except, as you pointed out, for NL-SAS and SATA (<=4TB, 6TB+ RG is limited to 14 drives). In the case of these ones, it will probably be better to divide the workload to active/active, to enable the most efficient use of ADP'ed drives.

In TR-3437 (July 2013), page 12, it is not 100% clear, but it looks like NetApp recommends 20 disks per RG for disks “3TB, 4TB, and larger”.

Can you please provide a link to, or the name of, the document that says SATA/NL-SAS disks greater than 3TB must be configured with an RG size of no more than 14 disks?

The Clustered Data ONTAP® 8.2 Physical Storage Management Guide states on page 117 that the maximum RAID-DP RG size for SATA/BSAS/FSAS/MSATA/ATA is 20.

At last I have found the answer at HWU.netapp.com.

On the controllers tab, select OS & System, then supported RAID configuration:

For Data ONTAP 8.3 RC1 using SATA or NL-SAS drives < 6TB, the maximum is 20 drives.

You probably mixed up the number 14 with the maximum number of drives for SAS RAID4.

For Data ONTAP 8.3 RC1 using SATA or NL-SAS drives >= 6TB, the maximum is 14 drives.

Hi Alex,

Just a quick FYI: the “ONTAP 8.2 Physical Storage Management Guide” you linked to has been updated for 8.2.1. With this update, the 8.2 RAID information you quoted has been changed to match the recommendation in the 8.3 quote in your post (see pages 93 & 94 of the 8.2 document you linked to):

All RAID groups in an aggregate should have a similar number of disks. The RAID groups do not have to be exactly the same size, but you should avoid having any RAID group that is less than one half the size of other RAID groups in the same aggregate when possible.

Thanks for the update Will!